Seeing Double: What You Need to Know about Duplicate Content

From an Organic Search perspective, checking for duplicate content is part of any thorough website analysis. It’s an incredibly common issue. Having worked in SEO for over a decade, I’ve performed countless site audits, and duplicate content showed up as an issue in nearly every one of them.

But what is duplicate content? Why is it bad? And what can you do about it?

Here are the basics on duplicate content, along with a few pointers on how to take care of it.

So What Is Duplicate Content?

On-site duplicate content is usually the result of a technical issue occurring on the back end of your site. Through a number of different avenues, two or more versions of the exact same webpage are created, each with its own distinct URL.

Off-site duplicate content is a little more devious. This typically occurs when you write a particularly newsworthy or useful piece of content, and someone else reposts it to their site. If you haven’t planned ahead, the copycat may be viewed as the originator instead of your site.

Now, there’s nothing really wrong with duplicate content. It’s just redundant. To a website visitor, nothing will appear erroneous at all. But to search engines, well, that’s a different matter entirely.

How Bad Is It?

When discussing duplicate content from the perspective of search engines, people tend to be overly frightened of its repercussions. Many assume there’s some type of Google penalty for duplicate pages, or that they count as spam content. Neither of these is true.

The real problem that search engines have with duplicate content is that it creates confusion. Google is trying to index the web and serve up the most relevant content for a given query. It’s a little hard to do that when two pages are exactly the same except for their URLs.

Without any outside indication, Google won’t know which of two (or more) dupes to include in its index, to attribute accrued link signals to, or to rank for specific search results. For site owners, this can mean lost opportunities for keyword rankings and Organic traffic. It also means that your link building efforts may be diluted by multiple URL versions rather than just one target. The end result of all this is reduced visibility for your content.

Don’t worry. It happens to the best of sites.

Dupes Happen

Content duplication can occur for many reasons. Here are a few of the causes I come across most frequently:

1. WWW vs. Non-WWW URLs

This is a common form of site-wide duplication, which occurs when a webmaster fails to plan for how the site handles its “www.” URL structure. It means there is one instance of the site at https://www.example.com and a complete duplicate of it at https://example.com.

2. HTTP vs. HTTPS

When switching to a secure version of your site, sometimes the HTTP version winds up still active by mistake.

3. Trailing Slash vs. Non-trailing Slash

Another site-wide duplicator, this occurs when a website has a 200-code page for both of these permutations: https://www.example.com/directory and https://www.example.com/directory/

4. Trailing Slash vs. File Extension

This can occur either site-wide or on specific pages. Here’s what this type of duplication looks like: https://www.example.com/page/ and https://www.example.com/page.html. Any type of file extension – .php, .aspx, etc. – can cause this.

5. Duplicate URLs with Parameters

This type of duplicate content is page-specific and comes in a few different flavors. Here are the most common:

- Paid campaign duplicates, which can also be complicated by multiple parameter orders creating even more dupes. Example: https://www.example.com/directory/?utm_source=google&utm_medium=cpc&utm_campaign=Test&utm_content=TestContent

- Pagination duplicates, typically for blog content. Example: https://www.example.com/blog/?page=2

- Printer-friendly parameters, creating a version of a page that’s cleaner for printing. Example: https://www.example.com/page?q=print
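To make the parameter problem concrete, here is a minimal Python sketch of how campaign parameters could be stripped so that every tagged variant collapses to one URL. The `IGNORED_PARAMS` set and the `strip_tracking_params` helper are my own illustrative names, not part of any tool mentioned here, and the sketch assumes these parameters never change what the page displays.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these parameters only carry campaign tracking data.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "utm_content", "utm_term"}


def strip_tracking_params(url: str) -> str:
    """Drop ignored parameters and sort the rest so parameter order stops mattering."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in IGNORED_PARAMS
    ]
    kept.sort()  # a fixed order means ?a=1&b=2 and ?b=2&a=1 become identical
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(kept), parts.fragment)
    )


tagged = (
    "https://www.example.com/directory/"
    "?utm_source=google&utm_medium=cpc&utm_campaign=Test&utm_content=TestContent"
)
print(strip_tracking_params(tagged))
```

Parameters that do change the content, such as the pagination example above, pass through untouched.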

6. Printer-friendly Directories

This is a directory version of printer-friendly parameter duplication, e.g., https://www.example.com/print/page, that calls up a printer-friendly CSS template.

7. Domain vs. .com/index.html

A website’s domain pulls its content from an index file of some sort. This type of duplicate content occurs when that index file also appears in the wild.

8. Additional Intermediate Directory

This occurs when the CMS throws a page into some unnecessary intermediate directory tree in addition to the normal pathway: https://www.example.com/extra-directory/directory/ and https://www.example.com/directory/

9. Duplicated Product Information

Sometimes ecommerce websites assign a product to two or more categories, which creates more than one URL version.

10. Copied or Syndicated Content

I’ve saved the best for last: This type of content duplication is different from those listed above in that it occurs off-site, which places this in the realm of intentional duplication. Someone copied your text verbatim, and it’s now out of your control (well, not entirely, but we’ll get to that).

Syndicated content is the most benign form of off-site duplicate, where your article or content is circulated across multiple websites. This happens often with press releases and news articles.

The more egregious form of off-site duplication occurs when an outside website scrapes your content and reposts it on their domain – often without crediting you! Sometimes this is done through automation, and in other cases it’s the result of theft by a particularly lazy webmaster.

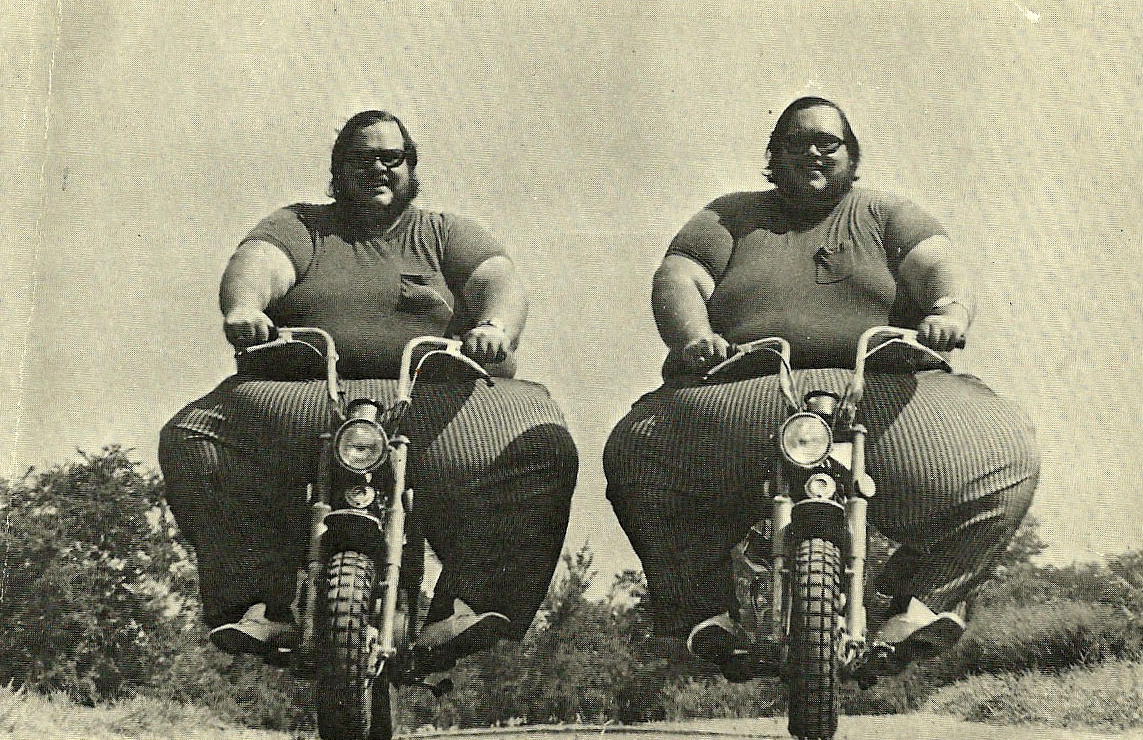

And Duplicates Multiply!

Duplicate content issues tend to interact with each other, multiplying the problem rather than just adding to it. I once performed an audit in which a site had three site-wide duplication issues, which resulted in 12 distinct duplicate versions of the entire website.
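The multiplication is easy to see in a quick Python sketch. The three issues below are hypothetical stand-ins chosen so the arithmetic lands on 12; they are not necessarily the ones from that audit.

```python
from itertools import product

# Hypothetical site-wide issues, each contributing its own set of variants.
schemes = ["http", "https"]                                       # HTTP vs. HTTPS
hosts = ["example.com", "www.example.com"]                        # non-WWW vs. WWW
paths = ["/directory", "/directory/", "/directory/index.html"]    # assumed three-way path variation

# Every combination is a separate URL serving the same page.
variants = [f"{scheme}://{host}{path}" for scheme, host, path in product(schemes, hosts, paths)]

print(len(variants))  # 2 * 2 * 3 = 12
for url in variants:
    print(url)
```

Each issue on its own looks minor, but the counts multiply, and they do so for every page on the site.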

Thankfully, duplicate content is usually fairly straightforward to remediate once you know what needs fixing.

Dealing with Duplicates

So now that you know what to look for, how do we find and fix these duplicate content problems?

Let’s start with some analysis.

Tools of the Trade

- The Screaming Frog SEO Spider Tool is excellent for this task as it allows you to crawl your website and filter for duplicates.

- I like to run Raven Tools’s Site Auditor as part of my initial technical analysis and find a few telling duplicate content signals that way.

- You should also check the HTML Improvements section (under Search Appearance) in your Google Search Console. This can indicate pages with duplicate Titles or Meta Descriptions, a possible sign of content duplication.

- Beyond that, running a site command in Google can show you the indexed URLs so that you can identify problematic ones.
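If you have a crawl export on hand, the core idea behind these duplicate filters can be sketched in a few lines of Python: fingerprint each page body and group URLs that share a fingerprint. This is a simplified illustration, not how any of the tools above actually work, and the `crawl` dictionary is made-up sample data.

```python
import hashlib
from collections import defaultdict
from typing import Dict, List


def group_duplicates(pages: Dict[str, str]) -> List[List[str]]:
    """Group URLs whose body text is identical once whitespace is normalized."""
    by_hash = defaultdict(list)
    for url, body in pages.items():
        normalized = " ".join(body.split())
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        by_hash[digest].append(url)
    # Only fingerprints shared by two or more URLs indicate duplication.
    return [sorted(urls) for urls in by_hash.values() if len(urls) > 1]


# Hypothetical crawl output: URL -> page body.
crawl = {
    "https://www.example.com/page/": "<h1>Widgets</h1>\n<p>All about widgets.</p>",
    "https://www.example.com/page.html": "<h1>Widgets</h1> <p>All about widgets.</p>",
    "https://www.example.com/about/": "<h1>About</h1><p>Who we are.</p>",
}

for group in group_duplicates(crawl):
    print(group)
```

Exact hashing only catches pages that match word for word, so near-duplicates with small template differences would need a fuzzier comparison.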

How to Fix It

Depending on the types of duplicate content you’ve unearthed, there are a few different solutions that may apply. Here are some common approaches:

Canonical Tags

Canonical tags should be your first line of defense, and I’d recommend putting them in place on every website. This is how you can hedge against off-site copycats and be recognized as the content’s originator.

A canonical tag is a single line of code that lives in the <head> section of your webpage’s HTML. It indicates your preferred version of the webpage’s URL. Example:

<link rel="canonical" href="https://arcintermedia.com/" />

These tags tell Google to consolidate all link signals to this preferred URL, provided that the canonical tag appears on all duplicate iterations. If you’re setting up canonical tagging dynamically through WordPress or any other CMS, make sure the canonicals don’t simply default to whatever URL is being displayed. If you tell Google that everything is canonical, then nothing is canonical.
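One way to catch canonicals that default to the displayed URL is to check that every duplicate declares the same preferred URL. Here is a rough Python sketch using only the standard library. The page snippets, the `PREFERRED` URL, and the helper names are hypothetical, and in practice you would feed in HTML fetched from your own site.

```python
from html.parser import HTMLParser
from typing import Dict, List, Optional


class CanonicalFinder(HTMLParser):
    """Record the href of the first <link rel="canonical"> tag encountered."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values and self.canonical is None:
            self.canonical = attributes.get("href")


def find_canonical(html: str) -> Optional[str]:
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


def mismatched_canonicals(pages: Dict[str, str], preferred: str) -> List[str]:
    """Return the URLs whose canonical tag is missing or points somewhere else."""
    return sorted(url for url, html in pages.items() if find_canonical(html) != preferred)


# Hypothetical duplicates of one page; the second one points at itself.
PREFERRED = "https://www.example.com/page/"
pages = {
    "https://www.example.com/page/":
        '<head><link rel="canonical" href="https://www.example.com/page/" /></head>',
    "https://www.example.com/page.html":
        '<head><link rel="canonical" href="https://www.example.com/page.html" /></head>',
}

print(mismatched_canonicals(pages, PREFERRED))
```

Any URL this reports is telling Google that it is the preferred version, which is exactly the everything-is-canonical trap.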

301 Redirects

The 301 Redirect is a server-side approach to solving duplicate content issues. For this, you’ll need to write some rules for your server with respect to handling various URL patterns, and you may need to update your .htaccess file depending on the type of server you use.

A 301 redirect, in essence, tells browsers and search engines that a page has moved permanently: if someone requests the old URL, the server sends them to the new one. This type of redirection is incredibly useful for dealing with site-wide content duplication such as WWW vs. Non-WWW, and it also helps to address 404 error pages. I recommend mapping the URLs you’re looking to redirect to their target pages first. This will make it easier to write the redirects later.
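Before writing server rules, it can help to spell out the mapping logic in plain code. The Python sketch below is only an illustration of that mapping step, not server configuration. It assumes a site that prefers HTTPS, the www host, and trailing slashes on directory URLs; `PREFERRED_SCHEME`, `PREFERRED_HOST`, and `redirect_target` are names I made up for the example.

```python
from typing import Optional
from urllib.parse import urlsplit, urlunsplit

# Assumptions for this sketch: the site's preferred scheme and host.
PREFERRED_SCHEME = "https"
PREFERRED_HOST = "www.example.com"


def redirect_target(url: str) -> Optional[str]:
    """Return the URL a request should be 301-redirected to, or None if it is already preferred."""
    parts = urlsplit(url)
    path = parts.path or "/"
    if path.endswith("/index.html"):
        # Fold the exposed index file back into its directory.
        path = path[: -len("index.html")]
    elif not path.endswith("/") and "." not in path.rsplit("/", 1)[-1]:
        # No file extension in the last segment: treat it as a directory.
        path += "/"
    target = urlunsplit((PREFERRED_SCHEME, PREFERRED_HOST, path, parts.query, parts.fragment))
    return None if target == url else target


for requested in [
    "http://example.com/directory",
    "https://www.example.com/index.html",
    "https://www.example.com/directory/",
]:
    print(requested, "->", redirect_target(requested))
```

Running your list of known duplicate URLs through a function like this gives you the old-to-new map to translate into actual redirect rules.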

For those operating less involved sites, WordPress has some useful plugins for 301 redirection as well. In that case you might not need to get your hands dirty writing redirect rules.

Google Search Console URL Parameters

Google Search Console can be a major help when dealing with parameter-based content duplication. Under the Crawl menu of Google Search Console, you’ll find the URL Parameters section. Here you can set up how Google handles various parameters, which can enable you to quickly knock out Pagination Duplicates.

Keep in mind that the URL Parameters feature only addresses parameter duplication issues in Google specifically.

Robots.txt

Your robots.txt file is the yin to your XML Sitemap’s yang. While the XML Sitemap serves as a feed of content you’d like to see indexed, the robots.txt file is a simple plain-text document that states what search engine crawlers should stay out of. Strictly speaking, it controls crawling rather than indexing.

The robots.txt can be used to keep crawlers away from duplicate intermediate directories and specific parameters, and it can cover any number of other duplicate content types (provided you craft the right wildcard syntax). Moz has a pretty good Robots.txt Cheat Sheet to help you get started.
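It’s worth testing rules before deploying them. Here is a small Python sketch that checks a draft robots.txt against a few URLs using the standard library’s `urllib.robotparser`. The rules are hypothetical examples based on the duplicate types above. Python’s parser matches by simple path prefix, so wildcard rules are left out of this sketch.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical draft rules blocking two duplicate directories.
ROBOTS_TXT = """\
User-agent: *
Disallow: /print/
Disallow: /extra-directory/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url: str) -> bool:
    """Report whether a generic crawler may fetch the URL under the draft rules."""
    return parser.can_fetch("*", url)


for url in [
    "https://www.example.com/page",
    "https://www.example.com/print/page",
    "https://www.example.com/extra-directory/directory/",
]:
    print(url, "crawlable" if is_crawlable(url) else "blocked")
```

A check like this makes it harder to accidentally block the one version of a page you actually want crawled.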

There Can Be Only One (URL)

While there is certainly a lot of technical jargon involved, fixing content duplication problems really doesn’t need to be a major headache. The hardest part is identifying the duplicates themselves. From there, the solutions become somewhat repetitive. You whittle down the dupes until only one URL path remains.

In the same way that SEO works best when it’s part of the initial website build, duplicate content problems are best addressed by prevention upfront. When building a new website, take a moment to consider URL construction, and test for some of the common types of content duplication on your staging site.

And after launching a new website, be sure to perform a full technical audit right away. It’s far easier to solve duplication issues when the site is small and new than after publishing your thousandth article. Save yourself the forensic work, and plan ahead.